About

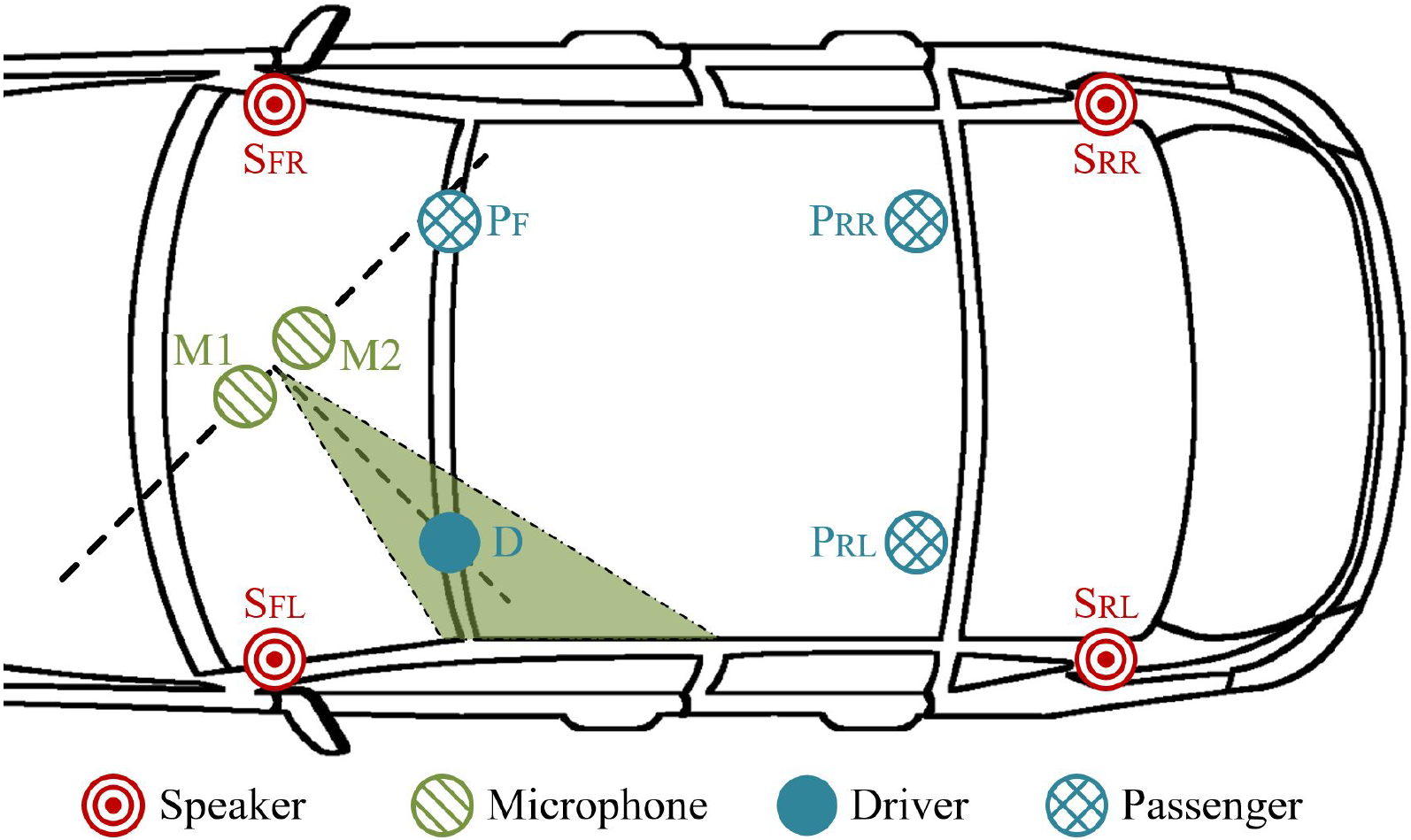

Driverless vehicles are becoming an irreversible trend in our daily lives, and humans can interact with cars through in-vehicle voice control systems. However, the automatic speech recognition (ASR) module in these voice control systems is vulnerable to adversarial voice commands, which may cause unexpected behaviors or even accidents in driverless cars. Due to the high demand for security assurance, it remains a challenge to defend in-vehicle ASR systems against adversarial voice commands from various sources in a noisy driving environment. In our paper, we develop a secure in-vehicle ASR system called SIEVE, which can effectively distinguish voice commands issued by the driver, passengers, or electronic speakers in three steps. First, it filters out multiple-source voice commands from multiple vehicle speakers by leveraging an autocorrelation analysis. Second, it identifies whether a single-source voice command comes from a human or an electronic speaker using a novel dual-domain detection method. Finally, it leverages the directions of voice sources to distinguish the voice of the driver from those of the passengers. We implement a prototype of SIEVE and perform a real-world study under different driving conditions. Experimental results show that SIEVE can defeat various adversarial voice commands against in-vehicle ASR systems.
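To give a rough intuition for the first step, the sketch below shows how an autocorrelation analysis can reveal that a recording contains the same signal arriving more than once, as could happen when several vehicle speakers at different distances play one command. It is a minimal illustration under stated assumptions, not the SIEVE implementation: the function names, the lag window, the 0.3 threshold, and the use of white noise in place of real speech are all hypothetical choices made for this example.

```python
import random


def normalized_autocorr(x, max_lag):
    """Return r[0..max_lag], the autocorrelation of x scaled so that r[0] == 1."""
    n = len(x)
    mean = sum(x) / n
    centered = [v - mean for v in x]
    energy = sum(v * v for v in centered)
    return [
        sum(centered[i] * centered[i + lag] for i in range(n - lag)) / energy
        for lag in range(max_lag + 1)
    ]


def has_delayed_copy(x, min_lag, max_lag, threshold=0.3):
    """Flag a signal whose autocorrelation has a strong peak away from lag 0.

    The lag window and threshold are illustrative assumptions, not values
    taken from the paper.
    """
    r = normalized_autocorr(x, max_lag)
    return max(abs(v) for v in r[min_lag:]) > threshold


def demo():
    """Compare one source with the same source plus a delayed, attenuated copy."""
    rng = random.Random(0)
    # White noise stands in for a voice command (an assumption for clarity;
    # real speech has its own short-lag correlation structure).
    source = [rng.gauss(0.0, 1.0) for _ in range(2000)]
    delay = 50  # hypothetical extra path length, in samples
    delayed = [0.0] * delay + source[:-delay]
    two_speakers = [a + 0.8 * b for a, b in zip(source, delayed)]
    return (
        has_delayed_copy(source, 10, 200),
        has_delayed_copy(two_speakers, 10, 200),
    )


if __name__ == "__main__":
    print(demo())
```

In this toy setting the single-source signal has no significant peak outside lag 0, while the two-speaker mixture shows a clear peak at the 50-sample delay. A real system would also have to cope with the pitch periodicity of speech, cabin reverberation, and road noise, which this sketch ignores.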

Published in the 23rd International Symposium on Research in Attacks, Intrusions and Defenses (RAID) 2020.


@inproceedings{wang2020sieve,
  author = {Shu Wang and Jiahao Cao and Kun Sun and Qi Li},
  title = {{SIEVE}: Secure In-Vehicle Automatic Speech Recognition Systems},
  booktitle = {23rd International Symposium on Research in Attacks, Intrusions and Defenses ({RAID} 2020)},
  year = {2020},
  isbn = {978-1-939133-18-2},
  address = {San Sebastian},
  pages = {365--379},
  url = {https://www.usenix.org/conference/raid2020/presentation/wang-shu},
  publisher = {{USENIX} Association},
  month = oct,
}

Team

The SIEVE system was developed by the following academic researchers:

Acknowledgments

This work is partially supported by the U.S. ARO grant W911NF-17-1-0447, U.S. ONR grant N00014-18-2893, and NSFC grant 61572278.